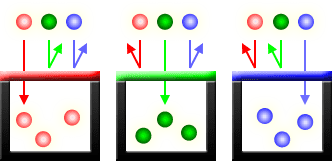


Everyone has a digital camera on their phone these days, but you, the aspiring photographer, might be thinking it’s time to summon the courage and upgrade to a higher-end camera. But if you browse the cameras on Amazon, you might notice that with higher quality comes more and more specs – odd strings of letters and numbers that make no sense to you. “What in all heck do ISO, megapixels, and f-stop mean?” you ask. Then, you head to a store to try one out. You gravitate towards one that’s within your price range, you pick it up, and you snap a picture. But as you press down on the shutter button, you hear a series of clicks and whirs. “I thought this thing was digital! What are all of those sounds?” you continue wondering. Then, you suddenly realize you know a lot less about how cameras work than you thought.

That’s okay. I started out that way too. A camera’s mechanics are rather complex, and the way they translate all of those odd sounds and numbers into pictures involves some pretty hefty engineering. In this two-part series, I’ll try to take the mystery out of cameras and explain how they work, from lens to film and photosensor. Follow me as I travel through a single-lens reflex (SLR) camera, the kind of camera used by most professional photographers today.

The Mechanics of an SLR Camera

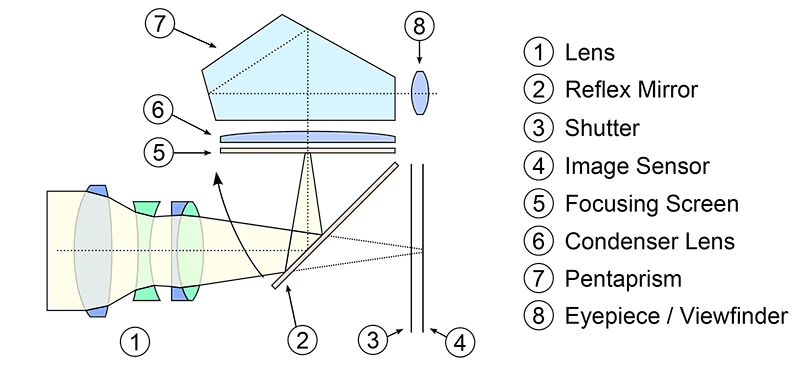

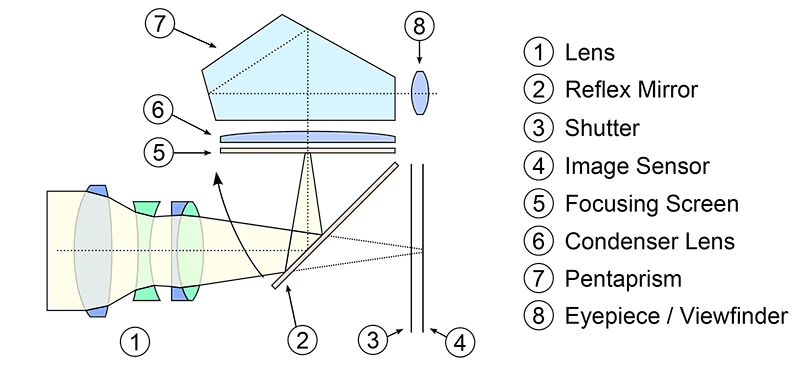

All SLRs capture images the same way, whether the image emblazons itself on film or encodes itself on a memory card. As I explain the anatomy of a camera, use the diagram below as your guide:

A cross-section of an SLR camera. Image courtesy of photographylife.com

It all starts with the lens (1). When light enters the lens, it passes through and hits a mirror (2) that’s sitting across the way at a 45-degree angle. The mirror directs the light upwards towards a compartment called a pentaprism (7), where it bounces around a bit until it finds its way to the viewfinder in your camera (8) – letting you see what the lens is pointing at. (Why a pentaprism and not another mirror? The mirror would deliver an upside-down image to your viewfinder, while a pentaprism keeps it upright.)

When you’re ready to snap a picture and hit the button, the mirror swings upward and blocks light from entering the viewfinder, while at the same time opening up a path that allows light to pass straight through the lens, through the now-open shutter (3), and onto the capture medium (4), whether it’s film (in a traditional camera) or a photosensor (in a digital camera). It’s a very simple setup of light and mirrors.

While the mechanics of an SLR camera are fairly straightforward, this is where the similarities between film and digital SLR cameras end.

How Images are Captured on Film

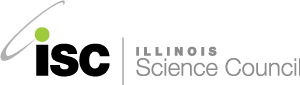

Traditional cameras capture images on film, which is made of a plastic sheet covered with a layer of gelatin (the same stuff that’s in gummy bears). The gelatin contains light-sensitive crystals made of a chemical called silver halide (see diagram below). Silver halide is what it sounds like: an atom of silver bound to a halogen atom, such as bromine or chlorine.

Black and white film contains one layer of silver halide crystals: when light hits the crystals it causes the silver and the halide to break apart, leaving behind metallic silver. The metallic silver makes the film turn dark when it’s developed. Color film contains multiple layers of silver halide, and each layer is sensitive to a different color of light.

Generally speaking, the size of the film affects the quality of your image – the bigger the film is, the more detail you can get. Old reel-to-reel movie cameras used 8mm film, a typical film SLR camera uses 35mm film, and IMAX cameras use 70mm film!

Photosensors: The “Film” of Digital Cameras

Photo cavities or “photosites.” These are lined up in a two-dimensional array and collect light. From Cambridge in Colour.

Like traditional film, a digital SLR’s photosensor, also known as an image sensor, is sensitive to light. But instead of using silver halide crystals to capture light, it uses a field of millions of microscopic cavities called photosites. Each photosite is like a cubicle at an office, where data is analyzed and interpreted. When you open the camera shutter to expose the photosites to light, each one collects photons (light particles). When the shutter closes, the photosites get covered up, which gives each site a chance to analyze the light it took in. The number of photons a photosite takes in determines the size of the electrical signal it sends into the camera, and ultimately, the brightness of that spot on the final image.
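To make the photon-counting idea concrete, here is a minimal sketch of how a photon count could be turned into a brightness value. The function name, the `full_well` capacity of 10,000 photons, and the 256 brightness levels are all assumptions I picked for illustration; real sensors differ in their capacities and in how they convert the signal.

```python
def photons_to_brightness(photon_count, full_well=10_000, levels=256):
    """Map a photosite's photon count to a brightness level from 0 to levels - 1.

    full_well is a hypothetical capacity: the count at which the photosite
    is "full" and cannot register any more light.
    """
    # A photosite cannot report fewer than zero photons or more than it can hold.
    clipped = min(max(photon_count, 0), full_well)
    # Scale linearly onto the available brightness levels (integer math, rounds down).
    return clipped * (levels - 1) // full_well


# A tiny 2x3 "sensor": each number is how many photons one photosite collected.
exposure = [
    [0, 2_500, 5_000],
    [7_500, 10_000, 20_000],
]

image = [[photons_to_brightness(count) for count in row] for row in exposure]
for row in image:
    print(row)
```

Notice that the last photosite collected twice its capacity but comes out no brighter than the one that was exactly full. That clipping is what an overexposed, "blown out" highlight looks like in this simplified model.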

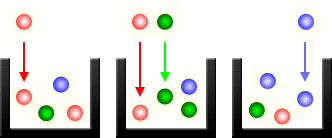

Simple enough, right? But at this point, the photosites can’t distinguish one color of light from another. To a photosensor, light is light; a photon is a photon. Without any more information than this, all you’d get are black-and-white images.

How does a digital camera capture color images, then?

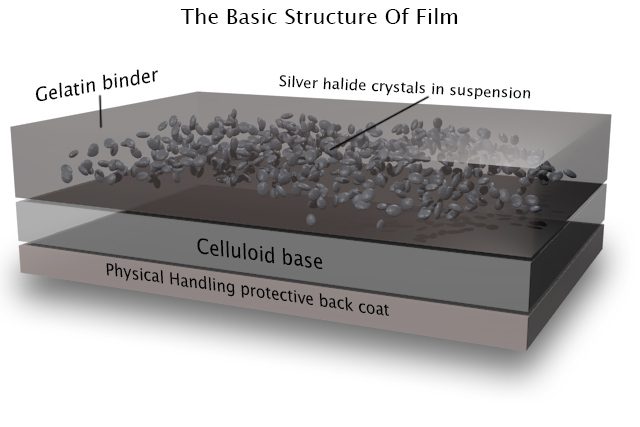

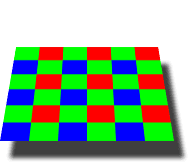

To capture color photographs, the image sensor in a DSLR is typically covered by what’s called a Bayer filter. This is a light filter that lies on top of the photosensor and, like a stained glass window in the ceiling of a room, only lets one color into each photosite. A Bayer filter, in particular, is a pretty simple design: it lets red light into a quarter of the photosites, blue into another quarter, and green into the remaining half, in a beautifully patterned array (see below). The camera knows which color each photosite is supposed to receive, so when light hits each one, it is able to calculate the levels of each color individually.

A Bayer Filter Array. From Cambridge in Colour.
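If you like to see patterns spelled out, the short sketch below builds a small Bayer layout and counts the colors. It assumes the common "RGGB" arrangement (red and green alternating on even rows, green and blue on odd rows); the function names are my own, and other arrangements of the same quarter-quarter-half split exist.

```python
from collections import Counter


def bayer_color(row, col):
    """Return the filter color sitting over the photosite at (row, col).

    Assumes an RGGB layout: even rows go R, G, R, G...; odd rows go G, B, G, B...
    """
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def bayer_mosaic(rows, cols):
    """Build a rows x cols grid of filter colors."""
    return [[bayer_color(r, c) for c in range(cols)] for r in range(rows)]


mosaic = bayer_mosaic(4, 4)
for row in mosaic:
    print(" ".join(row))

# Tally the colors: green should cover half the photosites, red and blue a quarter each.
counts = Counter(color for row in mosaic for color in row)
print(dict(counts))
```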

You may be asking, “if each photosite only collects either red, green, or blue, then how can a camera produce a photograph with more than just those colors?” Well, I know I said the camera measures the amount of light in each photosite individually, but to determine the true color of the light, it actually looks at multiple photosites at one time. Specifically, it analyzes a 2×2 square of photosites: it compares the relative levels of red, green, and blue among them, performs some calculations, and translates this information into the true color of the image. This 2×2 square forms a single pixel.
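Here is a rough sketch of that 2×2 idea in code. It assumes an RGGB layout (red top-left, green top-right and bottom-left, blue bottom-right) and simply averages the two green readings; the function name and the sample numbers are made up for illustration. Real cameras use more elaborate interpolation than this, so treat it as the simplified picture rather than what any particular camera does.

```python
def blocks_to_pixels(raw):
    """Collapse an RGGB mosaic of brightness readings into one (R, G, B) pixel per 2x2 block."""
    pixels = []
    for r in range(0, len(raw) - 1, 2):
        pixel_row = []
        for c in range(0, len(raw[r]) - 1, 2):
            red = raw[r][c]                                 # top-left photosite
            green = (raw[r][c + 1] + raw[r + 1][c]) // 2    # average of the two greens
            blue = raw[r + 1][c + 1]                        # bottom-right photosite
            pixel_row.append((red, green, blue))
        pixels.append(pixel_row)
    return pixels


# Hypothetical brightness readings (0-255) from a 2x4 patch of photosites.
raw = [
    [200, 100, 10, 240],
    [120, 50, 250, 20],
]

pixels = blocks_to_pixels(raw)
print(pixels)
```

The first block is strong in red with moderate green and little blue, so it comes out orange-ish; the second is almost entirely green. Neither color is pure red, green, or blue, which is the whole point of comparing neighboring photosites.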

Pixels Aren’t Everything

When point-and-shoot digital cameras first came out, digital camera manufacturers boasted about the number of megapixels their cameras contained, because the number of megapixels determines the resolution of the resulting photograph. Indeed, with more megapixels, a photographer can collect more information from the light they capture and print larger photographs without worrying that they’ll see a “pixelated” image.
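For a feel of how megapixels relate to print size, here is a back-of-the-envelope sketch. The 3:2 image shape and the 300 pixels-per-inch print density are assumptions I chose for the example (they are common rules of thumb, not figures from any camera’s spec sheet), and the function names are my own.

```python
import math


def pixel_dimensions(megapixels, aspect=(3, 2)):
    """Approximate image width and height in pixels for a given megapixel count."""
    total_pixels = megapixels * 1_000_000
    w_ratio, h_ratio = aspect
    height = math.sqrt(total_pixels * h_ratio / w_ratio)
    width = height * w_ratio / h_ratio
    return round(width), round(height)


def max_print_inches(megapixels, ppi=300):
    """Largest print (width, height) in inches at an assumed pixels-per-inch density."""
    width, height = pixel_dimensions(megapixels)
    return width / ppi, height / ppi


for mp in (6, 24):
    w_px, h_px = pixel_dimensions(mp)
    w_in, h_in = max_print_inches(mp)
    print(f"{mp} MP: {w_px} x {h_px} pixels, about {w_in:.1f} x {h_in:.1f} inches at 300 ppi")
```

Under these assumptions, quadrupling the megapixels only doubles each side of the print, because the pixels are spread over two dimensions.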

But a large number of megapixels does not necessarily guarantee a high-quality image. Image quality also depends on how big the pixels are. The more megapixels a manufacturer tries to pack onto a photosensor, the smaller the individual photosites have to become so they can all fit. If the photosites are too small, they cannot capture enough light to produce a quality image.

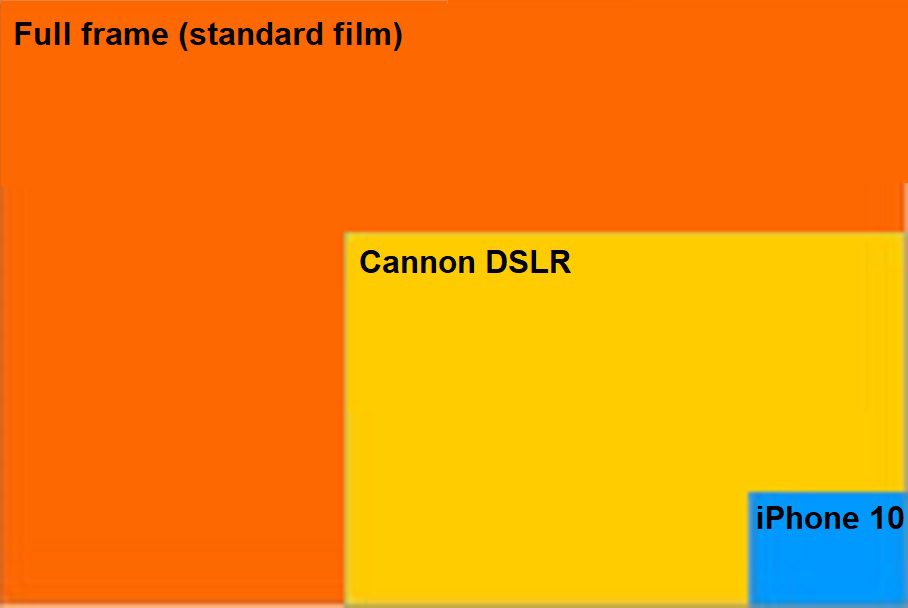

Size comparison of the image sensor in a standard film camera, a Canon Rebel DSLR camera, and an iPhone X.

The iPhone X and the Canon Rebel T3 DSLR camera, for instance, both contain 12 megapixels, but they produce images of vastly different quality. Why? The photosensor in an iPhone is only about 6×5 mm; meanwhile, the Canon Rebel’s sensor is about 22×15 mm, over 10 times as big in area (see the diagram above). For the same number of photosites to fit on each sensor, those on the iPhone sensor have to be about 10 times smaller in area than their counterparts in the Canon camera. This means each iPhone photosite captures roughly one-tenth as much light. It’s only with a large photosensor, which leaves room for larger photosites, that a camera can make good use of the megapixels it contains.
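The arithmetic behind that comparison can be checked with a few lines of code. This sketch uses the approximate sensor dimensions quoted above and assumes the whole sensor surface is split evenly among the photosites, which ignores the gaps and wiring on a real chip; the function name is my own.

```python
def photosite_area_um2(width_mm, height_mm, megapixels):
    """Average sensor area available per photosite, in square micrometers.

    Assumes the full sensor surface is divided evenly among the photosites.
    """
    sensor_area_um2 = (width_mm * 1000) * (height_mm * 1000)  # 1 mm = 1000 micrometers
    return sensor_area_um2 / (megapixels * 1_000_000)


phone = photosite_area_um2(6, 5, 12)    # approximate phone sensor from the text
dslr = photosite_area_um2(22, 15, 12)   # approximate DSLR sensor from the text

print(f"Phone photosite: {phone:.1f} square micrometers")
print(f"DSLR photosite:  {dslr:.1f} square micrometers")
print(f"The DSLR photosite has about {dslr / phone:.0f} times the light-collecting area")
```

With the same megapixel count on both sensors, the ratio of photosite areas is just the ratio of sensor areas: 330 square millimeters against 30, or about 11 to 1.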

The only time you can control the megapixel number and size of your image sensor is when you buy your camera or phone. Once you choose a camera to purchase, you’ve decided how much your camera can help you produce a quality image. But there are a lot more factors that go into taking a good photograph, beyond the capabilities of your camera. The rest is up to you.

In Part II, I describe the features of an SLR camera that you can control, which will help you capture a beautiful photograph.