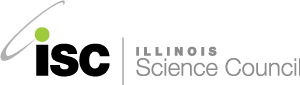

A bionic woman trains her robotic ear to recognize the sound of footsteps two blocks away. A man taps on a holographic screen to view a recording of his own memory. A scientist puts their finger to their temple to mentally command an army of robots.

You might recognize those scenes from popular science fiction, but thanks to brain research, technologies that can read signals directly from our brains now exist in early forms. The nearly 1 in 5 people who have physical disabilities could benefit from devices like these, for example by moving artificial limbs using just their thoughts. And as this research develops, we might see new technologies that can improve anyone’s life. But low participation in medical research is slowing progress. Although 57% of Americans believe that it is important for everyone to take part in clinical trials, fewer than 16% have ever done so. More volunteers, both with and without disabilities, are needed to fine-tune these technologies, which depend on Brain-Machine Interfaces (BMIs).

A BMI is a device that turns brain activity into information that a computer can read. Your brain is made up of cells called neurons that communicate with each other using electrical signals. BMIs detect and measure those electrical signals. The signals carry many kinds of information, from visual information telling you that there is an adorable dog across the street, to motor information telling your hands to pet that adorable dog.

Just by thinking about petting a dog, a person with BMI technology could command a mechanical arm to do the petting. Each part of the brain is highly specialized, so the location of a signal in the brain is key to figuring out its meaning. By determining which neurons are signaling and where, these devices can interpret what the signal means. From there, the device can transmit that information to a machine that can act on it. The technology can also work in the other direction, stimulating specific parts of the brain. Stimulating the area that recognizes smells, for instance, could make someone think they’re smelling fresh flowers instead of wet dog.

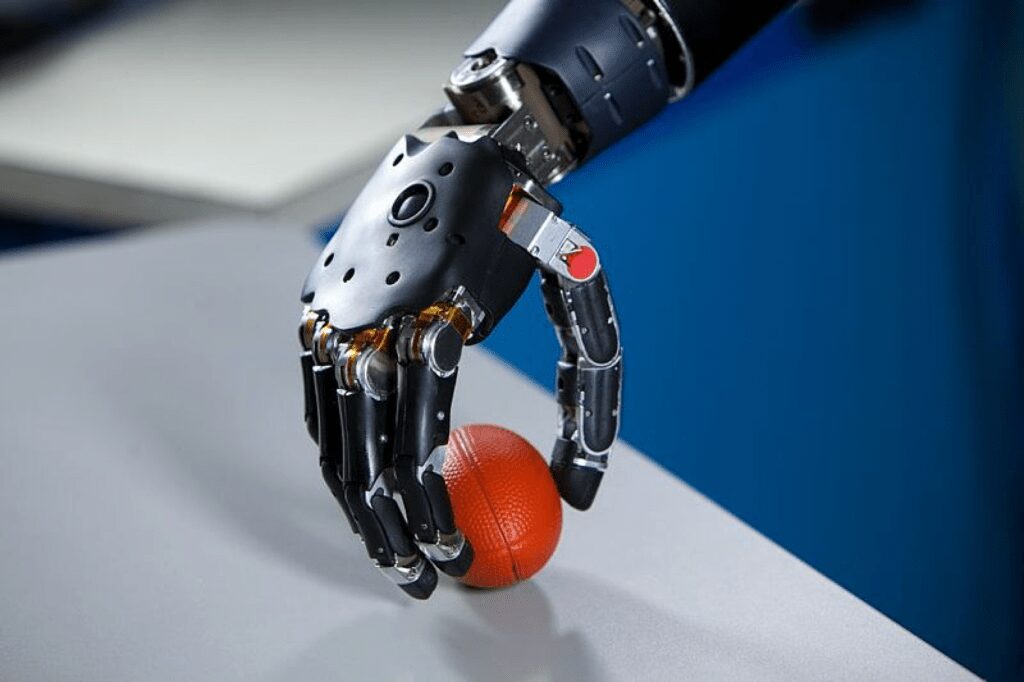

Researchers are now using BMIs to create mechanical limbs that can be moved just by thinking about moving them. Dr. Nicholas Hatsopoulos at the University of Chicago has researched how BMIs can help amputees move their prosthetic limbs. These prosthetic limbs receive electrical signals from the brain and move much like flesh-and-blood limbs do. The technology can even stimulate the part of the brain that processes touch, allowing people to feel themselves moving the limb. This works because the neurons that signal a limb to move stay largely the same after an amputation: when someone thinks about moving the amputated limb, those neurons fire even though there’s no limb to move.

But figuring out which neurons are responsible for moving a limb requires knowing which neurons signal when the limb is moved. For people missing a limb, there’s no way to be sure that the signal is correct, because you can’t see whether the arm moves when the neurons activate. Dr. Hatsopoulos used BMIs to solve this problem, too.

He teamed up with able-bodied people to study BMIs and neurons. His research team implanted a BMI that read randomly selected neurons and found that, with enough training and practice, people could control the machine with their brains.

The neurons fully rewired themselves to accommodate the machine!

Understanding how the brain adapts and how neurons rewire allowed Dr. Hatsopoulos to refine the technology and design a functional prosthetic for people born without a limb. And he couldn’t have done it without the help of volunteers both with and without disabilities.

BMIs have also been used to create speech for people who can’t speak because of paralysis or an advanced brain disease. Typically, these individuals use devices that measure tiny movements to select letters, then generate speech from the typed words, but these devices are slow and frustrating to use. Dr. Nima Mesgarani at Columbia University is working on a technology that reads the activity in the part of the brain that controls speech and determines what a person intends to say, distinguishing intended speech from ordinary thought so that the machine can speak for them.

So far, the technology has only been used experimentally, and again, volunteers without speech difficulties were invaluable to the findings as control subjects. The subjects spoke sentences aloud while wearing special sensors, and a computer then recreated those sentences from the recorded brain activity. Other volunteers were able to understand the recreated sentences about 70% of the time. There is still much work to be done before people with disabilities can use this technology, but as research continues, it will hopefully become even more accurate.

BMI technology could change lives, but progress in this area depends on participation from volunteers, regardless of their ability status. Researchers like Dr. Hatsopoulos and Dr. Mesgarani, and their teams, have only been able to accomplish what they have because people chose to participate in their research studies and helped them test their machines. With your support, we can accelerate the development of technologies that assist people with physical disabilities and bring brain-machine interfaces from science fiction into reality. Check out these links to find ways to get involved in clinical trials and research.

Maggie Colton is a fourth-year undergraduate at the University of Chicago studying cancer biology. When she’s not in the lab, she enjoys baking and playing her tuba.

Maggie’s article is part of a collaboration between the Illinois Science Council and the University of Chicago.